Quantum Reality and Cosmology

Complementarity and Spooky Paradoxes

Genotype 1.0.70 jan 26 - PDF

Contents

Quantum Theory and Relativity

Quantum Reality

Introduction

This article is designed to give an overview of all the developments in quantum reality and cosmology, from the theory of everything to the spooky properties of quantum reality that may lie at the root of the conscious mind. Along the way, it takes a look at just about every kind of weird quantum effect so far discovered, while keeping to a description which the general reader can follow without a great deal of prior knowledge of the area.

The universe appears to have had an explosive beginning, sometimes called the big bang, in which space and time as well as the material leading to the galaxies were created. The evidence is pervasive, from the increasing red-shift of recession of the galaxy clusters, like the deepening sound of a train horn as the train recedes, to the existence of cosmic background radiation, the phenomenally stretched and cooled remnants of the original fireball. The cosmic background shows irregularities of the early universe at the time radiation separated from matter, when the first atoms formed from the flux of charged particles. From a very regular, symmetrical 'isotropic' beginning for such an explosion, these fluctuations, which may be of a quantum nature, have become phenomenally expanded and smoothed to the scale of galaxies, consistent with a theory called inflation. The large-scale structure of the universe in our vicinity, out to a billion light years surrounding the Milky Way, including our super-cluster Laniakea and even larger structures such as the Shapley Attractor and the Dipole Repeller, shaped by variations in dark matter as in the Millennium simulation, is shown in Fig 1.

Fig 2: (a) The cosmic background - a red-shifted primal fireball (WMAP). This radiation separated from matter, as charged plasma condensed to atoms. The fluctuations are smoothed in a manner consistent with subsequent inflation. (b) Eternal inflation and big bounce models. Fractal inflation model leaves behind mature universes while inflation continues endlessly. Big crunch leads to a new big(ger) bang. (c) Darwin in Eden: "Paradise on the cosmic equator." - life is an interactive complexity catastrophe consummating in intelligent organisms, resulting ultimately from force differentiation. This summative Σ interactive state is thus cosmological and as significant as the α of its origin and Ω of the big crunch or heat death in endless expansion.

Origin of Time and Space: In special relativity, the space-time interval (3) $ds^2 = dx^2 + dy^2 + dz^2 - c^2\,dt^2 = dx^2 + dy^2 + dz^2 + c^2\,d\tau^2$, where $\tau = it$, can be expressed either (left) in terms of real time $t$ in Minkowski space, in which the interval is independent of the inertial frame of reference under the Lorentz transformations of special relativity, or equivalently (right) in terms of imaginary time $\tau$ in ordinary Euclidean 4-D space. These two are generalized in higher spatial dimensions into anti-De Sitter and De Sitter space respectively (see fig 3(b)). Hartle and Hawking suggest that if we could travel backward in time toward the beginning of the Universe, we would note that quite near what might have otherwise been the beginning, time gives way to space such that at first there is only space and no time. Beginnings are entities that have to do with time; because time did not exist before the Big Bang, the concept of a beginning of the Universe is meaningless. According to the Hartle-Hawking proposal, the Universe has no origin as we would understand it: the Universe was a singularity in both space and time, pre-Big Bang. Thus, the Hartle-Hawking state Universe, or its wave function, has no beginning - it simply has no initial boundaries in time or space, rather like the south pole of the Earth in Euclidean space with imaginary time, but becomes a singularity in Minkowski space in real time. According to the theory, time diverged from a three-state dimension once the Universe had reached the age of the Planck time $t_P = \sqrt{\hbar G / c^5}$, the time required for light to travel in a vacuum a distance of 1 Planck length $\ell_P = \sqrt{\hbar G / c^3}$, or approximately 5.39 × 10⁻⁴⁴ s. Because the Planck time comes from dimensional analysis, which produces a factor with the dimensionality of time from fundamental units but ignores constant factors, the Planck length and time represent a rough scale at which quantum gravitational effects are likely to become important. Also, since the universe was finite and without boundary in its beginning, according to Hawking, it should ultimately contract again.
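As a quick check on the quoted figure, the Planck length and time can be computed directly from the fundamental constants. The sketch below assumes the standard dimensional-analysis definitions and CODATA 2018 values for the constants; the function names are illustrative only.

```python
import math

# CODATA 2018 values in SI units (assumed here; not quoted in the article)
HBAR = 1.054571817e-34   # reduced Planck constant, J s
G = 6.67430e-11          # Newtonian gravitational constant, m^3 kg^-1 s^-2
C = 299_792_458.0        # speed of light in vacuum, m/s


def planck_length() -> float:
    """Planck length l_P = sqrt(hbar * G / c^3), in metres."""
    return math.sqrt(HBAR * G / C**3)


def planck_time() -> float:
    """Planck time t_P = l_P / c = sqrt(hbar * G / c^5), in seconds."""
    return planck_length() / C


if __name__ == "__main__":
    print(f"Planck length: {planck_length():.3e} m")
    print(f"Planck time:   {planck_time():.3e} s")
```

Because the definitions ignore constant factors such as 2π, the result should be read as an order-of-magnitude scale rather than a sharp threshold.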

The Holographic Principle, Entanglement, Space-Time and Gravity

Two forms of evidence link quantum entanglement to cosmological processes that may involve gravity and the structure of space-time. The holographic principle asserts that in a variety of unified theories, an n-D theory can be holographically represented by the physics of a corresponding (n-1)-D theory on a surface enclosing the region.

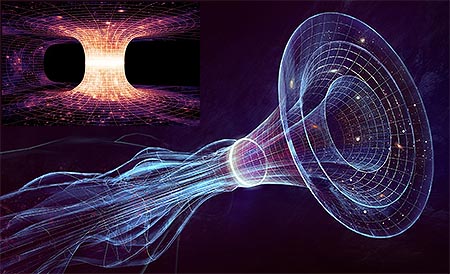

Fig 3: (a) An illustration of the holographic principle in which physics on the 3D interior of a region, involving gravitational forces represented as strings, is determined by a 2D holographic representation on the boundary in terms of the physics of particle interactions. This correspondence has been successfully used in condensed matter physics to represent the transition to superconductivity, as the dual of a cooling black hole's "halo" (Merali 2011, Sachdev arXiv:1108.1197). (b) Holographic principle explained. Einstein's field equations can be represented on anti-de Sitter space, a space similar to hyperbolic geometry, where there is an infinite distance from any point to the boundary. This 'bulk' space can also be thought of as a tensor network as in (c). In 1998 Juan Maldacena discovered a 1-1 correspondence between the gravitational tensor geometry in this space and a conformal quantum field theory, like standard particle field theories, on the boundary. A particle interaction in the volume would be represented as a more complex field interaction on the boundary, just as a hologram can generate a complex 3D image from wavefront information on a 2D photographic plate (Cowen 2015). The holographic principle can be used to generate dualities between higher dimensional string theories and more tractable theories that avoid the infinities that can arise when we try to use the analogue of Feynman diagrams for perturbation theory calculations in string theory. (c) Entanglement plays a pivotal role because when the entanglement between two regions on the boundary is reduced to zero, the bulk space pinches off and separates into two regions. (d) In an application to cosmology, entanglement on the horizon of black holes may occur if and only if a wormhole in space-time connects their interiors. Einstein and Rosen addressed both wormholes and the pair-splitting EPR experiment. Juan Maldacena sent colleague Leonard Susskind the cryptic message ER=EPR outlining the root idea that entanglement and wormholes were different views of the same phenomenon (Maldacena and Susskind 2013, Ananthaswamy 2015). (e) Time may itself be an emergent property of quantum entanglement (Moreva et al. 2013). An external observer (1) sees a fixed correlated state, while an internal observer using one particle of a correlated pair as a clock (2) sees the quantum state evolving through two time measurements using polarization-rotating quartz plates and two beam splitters PBS1 and PBS2.

The Holographic Principle: A collaboration between physicists and mathematicians has made a significant step toward unifying general relativity and quantum mechanics by explaining how spacetime emerges from quantum entanglement in a more fundamental theory. The holographic principle states that gravity in, say, a three-dimensional volume can be described by quantum mechanics on a two-dimensional surface surrounding the volume. The process applies generally to anti-de Sitter spaces modelling gravitation in n dimensions and conformal field theories in (n-1) dimensions, and plays a central role in decoding string and M-theories. Juan Maldacena's (1998) paper has become the most cited one in theoretical physics, with over 7000 citations. The researchers have now found that quantum entanglement is the key to how this correspondence works. Using a quantum theory (that does not include gravity), they showed how to compute the energy density, which is a source of gravitational interactions in three dimensions, using quantum entanglement data on the surface. This allowed them to interpret universal properties of quantum entanglement as conditions on the energy density that should be satisfied by any consistent quantum theory of gravity, without explicitly including gravity in the theory (Lin et al. 2015).

In a second, experimental investigation working directly with quantum entangled states (Moreva et al. 2013), time itself was found to be an emergent property of quantum entanglement. In the experiment, an external observer sees time as fixed throughout, while an observer using one particle of an entangled pair as a clock perceives time as evolving (fig 3(e)).

The holographic principle, otherwise known as the anti-de Sitter/conformal field theory (AdS/CFT) correspondence, has since been found to imply several conjectures when applied to combine gravity and quantum mechanics: that no global symmetries are possible, that internal gauge symmetries must come with dynamical objects that transform in all irreducible representations, and that internal gauge groups must be compact (Harlow & Ooguri 2019). Their previous work had found a precise mathematical analogy between the holographic principle and quantum error correcting codes, which protect information in a quantum computer. In the new paper, they showed that such quantum error correcting codes are not compatible with any global symmetry, meaning that such symmetry would not be possible in quantum gravity. This result has several important consequences. It predicts, for example, that protons are stable against decaying into other elementary particles, and that magnetic monopoles exist.

Fig 4: Above: Sketch of the timeline of the holographic Universe. Time runs from left to right. The far left denotes the holographic phase and the image is blurry because space and time are not yet well defined. At the end of this phase (denoted by the black fluctuating ellipse) the Universe enters a geometric phase, which can now be described by Einstein's equations. The cosmic microwave background was emitted about 375,000 years later. Patterns imprinted in it carry information about the very early Universe and seed the development of structures of stars and galaxies in the late time Universe (far right). Below: Angular power spectrum of CMB anisotropies, comparing Planck 2015 data with best fit ΛCDM (dotted blue curve) and holographic cosmology (solid red curve) models, for l ≥ 30.

Holographic Origin: A class of holographic models for the very early Universe (Afshordi et al. 2017), based on three-dimensional perturbative super-renormalizable quantum field theory (QFT), has been tested against cosmic microwave background observations and found to be competitive with the standard inflationary model of cosmology, cold dark matter with a cosmological constant (ΛCDM).

Inflation, Dark Matter and Dark Energy

Cosmic Inflation: Alan Guth and Alexei Starobinsky proposed in 1980 that a negative pressure field, similar in concept to dark energy, could drive cosmic inflation in the very early universe. This repulsive force, qualitatively similar to dark energy, would result in an enormous, exponential expansion of the universe just after the Big Bang, but at a much higher energy density than the dark energy we observe today, and is thought to have completely ended when the universe was just a fraction of a second old. It is unclear what relation, if any, exists between dark energy and inflation. Nearly all inflation models predict that the total (matter + energy) density of the universe should be very close to the critical density. The evidence from the early universe indicates that there was not simply an explosive beginning in a big bang but an extremely rapid exponential inflation of the universe in the first 10⁻³⁵ s into essentially the huge expanding universe we see today.

Since dark energy and dark matter dominate the cosmological mass-energy equation, interest is now focusing on dark inflation as being better capable of modelling and picturing the earliest phases of the universe, such as the inflationary period, whose energy parameters and precise dynamics remain highly uncertain. The range of energies at which inflation could have occurred is vast, stretching over 70 orders of magnitude. In order to recreate the observed dominance of radiation in the Universe, inflatons should lose energy rapidly. The researchers behind the model propose two physical mechanisms which could be responsible for the process, and report that it predicts the course of the Universe's thermal history with far greater accuracy than previous models. If inflation involved the dark sector, the contribution of gravitational waves would be increased proportionally. This means that traces of the primordial gravitational waves are not as weak as originally thought, and could be detected by observatories currently at the design stage or under construction (Artymowski M et al. 2018 doi:10.1088/1475-7516/2018/04/046). The stability of the earliest phase against immediate gravitational collapse also appears to depend on the relation between gravity and the Higgs field in the inflationary epoch (Herranen M et al. 2018 Phys. Rev. Lett. 113, 211102).

Fig 4b: Dark inflation gives a more precise description of the inflationary period based on the dominant mass-energy components of the universe, and predicts gravitational waves detectable through existing pulsars (circled far right), which may soon be within reach of a new generation of instruments. Data suggests that primordial gravitational waves could be detected by observatories currently at the design stage or under construction, such as the Deci-Hertz Interferometer Gravitational Wave Observatory (DECIGO), Laser Interferometer Space Antenna (LISA), European Pulsar Timing Array (EPTA) and Square Kilometre Array (SKA). The first events could be detected in the coming decade.

In some 'eternal inflation' models the inflation is fractal, leaving behind mature 'bubble' universes while inflation continues unabated (fig 2(b)). The inflationary model explains the big bang neatly in terms of the same process of symmetry-breaking which caused the four forces of nature (gravity, electromagnetism and the weak and strong nuclear forces) to become so different from one another. The large-scale cosmic structure is thus related to the quantum scale in one logical puzzle. In this symmetry-breaking the universe adopted its very complex 'twisted' form, which made possible the hierarchical interaction of the particles to form protons and neutrons, then atoms, and finally molecules and complex molecular life. We can see this twisted nature in the fact that all the particles in the nucleus, the protons and neutrons, are positively charged or neutral, while the electrons orbiting an atom are all negatively charged. Some theories model inflation on the idea of a scalar field, and some of these consider that the Higgs particle may itself be the source of the hypothetical inflaton generating this field (arXiv:1011.4179).

Symmetry-breaking is a classic example of engendering at work. Cosmic inflation explains why the universe seems to have just about enough energy to fly apart into space and no more, and why disparate regions of the universe, which seemingly couldn't have communicated at the speed of light since the big bang, seem to be so regular. Inflation ties together the differentiation of the fundamental forces and an exponential expansion of the universe based on a form of anti-gravity which exists only until the forces break their symmetry. Inflation explains galactic clusters as phenomenally inflated quantum fluctuations and suggests that our entire universe may have emerged from its own wave function in a quantum fluctuation. However, more recent modelling suggests that, due to these quantum effects, inflation can lead to a multiverse where the universe breaks up into an infinite number of patches, which explore all conceivable properties as you go from patch to patch. Hence we shall investigate other models, such as the ekpyrotic scenario, which also predict a smoothed-out universe. On the other hand, the latest data from the Planck satellite does favour the simplest models of inflation, in which the size of temperature fluctuations is, on average, the same on all distance scales (doi:10.1038/nature.2014.16462).

Our view of the distant parts of the universe, which we see as they were long ago because of the time light has taken to reach us, likewise confirms a different, more energetic early galactic life. We can look out to the limits of the observable universe and, because of the long delay which light takes to cross such a vast region, witness quasars and early energetic galaxies, which are quite different from mature galaxies such as our own Milky Way.

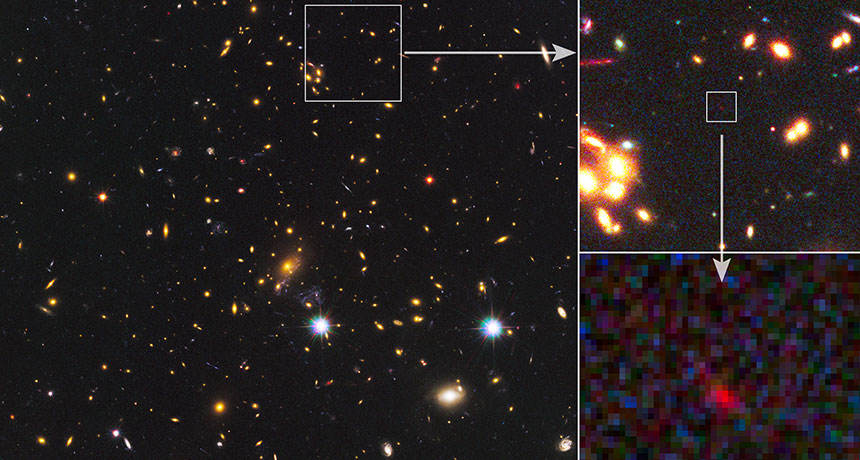

Fig 5: Researchers used instruments at the Atacama Large Millimeter/submillimeter Array observatory in Chile to observe light emitted in a galaxy called MACS1149-JD1, one of the farthest light sources visible from Earth. The emissions are a clue to the galaxy's redshift, detected in an emission line of doubly ionized oxygen at a redshift of 9.1096 ± 0.0006. The galaxy's redshift suggests that the starlight was emitted when the universe was about 550 million years old, but many of those stars were already about 300 million years old, further calculations indicate. That finding suggests that the stars would have blinked into existence some 250 million years after the universe's birth (Hashimoto doi:10.1038/s41586-018-0117-z).

Early Evolution: As shown in fig 7, the evolution of the early universe from the end of the hypothesized inflationary period begins with the cosmic background radiation, emitted when light separated from matter as the charged plasma coupled to light condensed to form neutral atoms. This led initially to a dark age of a few hundred million years before the first galaxies formed and stars began to shine. The evidence from fig 5 implies that the first stars were radiating from as early as 250 million years after the cosmic origin. Other research at radio frequencies (SN: 3/31/18 p6) suggests star formation began as early as 180 million years after the origin.
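The conversion from a measured redshift to a cosmic age, as used for MACS1149-JD1 in fig 5, can be sketched with the closed-form age of a flat universe containing only matter and a cosmological constant. The parameter values below (a Hubble constant of 67 km/s/Mpc, 31.7% matter and 68.3% dark energy) are taken from figures quoted elsewhere in this article; neglecting radiation and assuming exact flatness are simplifications, and the function name is illustrative.

```python
import math

KM_PER_MPC = 3.0857e19        # kilometres in one megaparsec
SECONDS_PER_GYR = 3.15576e16  # seconds in 10^9 Julian years


def age_at_redshift(z, h0=67.0, omega_m=0.317, omega_lambda=0.683):
    """Age in Gyr of a flat matter + Lambda universe at redshift z.

    Uses t(a) = 2 / (3 H0 sqrt(OL)) * asinh( sqrt(OL / Om) * a^(3/2) ),
    with scale factor a = 1 / (1 + z). Radiation is neglected, which is
    an assumption that slightly overestimates very early ages.
    """
    hubble_time_gyr = KM_PER_MPC / h0 / SECONDS_PER_GYR  # 1 / H0 in Gyr
    a = 1.0 / (1.0 + z)
    x = math.sqrt(omega_lambda / omega_m) * a**1.5
    return 2.0 / (3.0 * math.sqrt(omega_lambda)) * hubble_time_gyr * math.asinh(x)


if __name__ == "__main__":
    z_galaxy = 9.1096  # redshift of MACS1149-JD1 quoted in fig 5
    print(f"Age at z = {z_galaxy}: {1000 * age_at_redshift(z_galaxy):.0f} Myr")
    print(f"Age today (z = 0):  {age_at_redshift(0.0):.2f} Gyr")
```

Under these assumptions the age at a redshift of 9.1096 comes out a little under 550 million years, in line with the figure quoted in the caption; different parameter choices shift the result by a few percent.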

Reheating: The link between the inflationary phase and the view we have of the hot particulate origin of the expanding universe in the Big Bang is called "reheating". The earliest phases of reheating should be marked by resonances. One form of high-energy matter dominates, shaking back and forth in sync with itself across large expanses of space, which leads to explosive production of new particles. This in turn causes the resonant effect to break up, and the produced particles scatter off each other and come to some sort of thermal equilibrium, reminiscent of Big Bang conditions. The scientists chose a model of inflation whose predictions closely match high-precision measurements of the cosmic microwave background emitted 380,000 years after the Big Bang, which is thought to contain traces of the inflationary period. The simulation tracked the behaviour of two types of matter that may have been dominant during inflation, very similar to a type of particle, the Higgs boson, that was recently observed in other experiments. Matter at very high energies was modelled as interacting with gravity in ways that are modified by quantum mechanics at the atomic scale. Quantum-mechanical effects predict that the strength of gravity can vary in space and time when interacting with ultra-high-energy matter, an effect known as non-minimal coupling. They found that the stronger the quantum-modified gravitational effect on matter, the faster the universe transitioned from the cold, homogeneous matter of inflation to the much hotter, diverse forms of matter that are characteristic of the Big Bang. By tuning this quantum effect, they could make this crucial transition take place over 2 to 3 "e-folds", an e-fold being the time it takes for the universe to grow by a factor of e ≈ 2.718, that is, to (roughly) triple in size. By comparison, inflation itself took place over about 60 e-folds (Nguyen et al. 2019).
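To make the e-fold counts concrete, the sketch below converts a number of e-folds into a linear expansion factor and back. It assumes only the definition given above, growth by a factor of e per e-fold; the function names are illustrative.

```python
import math


def expansion_factor(n_efolds: float) -> float:
    """Linear growth factor of the universe after n_efolds e-folds."""
    return math.exp(n_efolds)


def efolds_for_factor(factor: float) -> float:
    """Number of e-folds needed to grow by the given linear factor."""
    return math.log(factor)


if __name__ == "__main__":
    for n in (2, 3, 60):
        print(f"{n:>2} e-folds -> growth by a factor of {expansion_factor(n):.3g}")
    print(f"Tripling in size takes {efolds_for_factor(3.0):.2f} e-folds")
```

Reheating over 2 to 3 e-folds therefore corresponds to growth by roughly a factor of 7 to 20, whereas 60 e-folds of inflation correspond to a factor of order 10²⁶.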

Ultimate fate: The eventual fate of the universe is less certain, because its rate of expansion brings it very close to the limiting condition between the gravitational attraction of the mass-energy it contains ultimately reversing the expansion, causing an eventual collapse, and continued expansion forever. The evidence has been in favour of a perpetual and possibly accelerating expansion, and astronomers are seeking an explanation for the apparent lack of mass in dark matter, and for the acceleration in a dark energy, called 'quintessence' in some of its varying forms, that may change over time. Dark energy has caused the original expansion of the universe to accelerate, but its effect now appears to be weakening, leaving the ultimate fate of the universe uncertain between a heat death and a big crunch (DESI 2025, Son et al 2025).

The missing mass is clearly evident in nearby galaxies, which spin so rapidly they would fly apart if the only matter present was the luminous matter of stars, black holes and gaseous nebulae. WMAP and Planck data now suggest the universe's rate of expansion has increased part way through its lifetime and that its large-scale dynamics are governed mostly by dark energy (68.3%), with successively smaller contributions from dark matter (26.8%) and ordinary galactic matter and radiation (4.9%). At the time of the cosmic microwave background radiation (CMB), dark matter comprised 63% of the mass-energy, photons 15%, atoms 12% and neutrinos 10%, but because photons have zero rest mass, and the CMB is full of low energy photons, the particle ratio is about 10⁹ photons for each proton or neutron.

Fig 6: (left) SN 2011fe, a type Ia supernova 21 million light-years away in galaxy M101 discovered in 2011, shown in before and after images of the galaxy. Theoretical models for the current expansion rate, taking into account normal and dark matter and dark energy and using cosmic microwave background data, infer a Hubble constant of 67 km/s/Mpc, but current measurements of supernovae put the figure at 73 or 74, based on actually measuring the expansion by analyzing how the light from distant supernova explosions has dimmed over time. Explanations vary from quintessence models of an actual field rather than a cosmological constant, through additional neutrino types or relativistic particles moving close to light speed, to interactions between dark matter and radiation in the early universe. (right) Discrepancies between early and late measurements of the Hubble constant.

Dark energy: A type Ia supernova occurs in a binary system in which one of the stars is a white dwarf, which gradually accretes mass from its companion, which can be anything from a giant star to an even smaller white dwarf, until its core reaches the ignition temperature for carbon fusion. Within a few seconds of initiation of nuclear fusion, a substantial fraction of the matter in the white dwarf undergoes a runaway reaction, releasing enough energy to unbind the star in a supernova explosion. This process produces a consistent peak luminosity because of the uniform mass of the white dwarfs that explode via the accretion mechanism. The stability of this value allows these explosions to be used as standard candles to measure the distance to their host galaxies, because the visual magnitude of the supernovae depends primarily on the distance. In 1998 two separate teams noted that distant supernovae of this type were much dimmer than they should be. The simplest and most logical explanation is that the expansion of the universe is now accelerating by comparison with measures of the earlier universe such as the cosmic microwave background (arXiv:astro-ph/9805201, arXiv:astro-ph/9812133).
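The standard-candle argument rests on the distance modulus, which relates apparent magnitude m, absolute magnitude M and distance d in parsecs by m - M = 5 log10(d / 10 pc). The sketch below applies it to SN 2011fe at the 21 million light-years quoted in fig 6. The peak absolute magnitude of -19.3 is an assumed typical value for type Ia supernovae, not a figure from this article, and dust extinction and cosmological corrections are ignored.

```python
import math

LY_PER_PARSEC = 3.2616   # light-years in one parsec
M_TYPE_IA = -19.3        # assumed typical peak absolute magnitude of a type Ia


def apparent_magnitude(abs_mag: float, distance_pc: float) -> float:
    """Apparent magnitude from the distance modulus m = M + 5 log10(d / 10 pc)."""
    return abs_mag + 5.0 * math.log10(distance_pc / 10.0)


def distance_from_magnitudes(app_mag: float, abs_mag: float) -> float:
    """Distance in parsecs, inverting the distance modulus."""
    return 10.0 ** ((app_mag - abs_mag) / 5.0 + 1.0)


def distance_ratio_for_dimming(delta_mag: float) -> float:
    """Factor by which the inferred distance grows if a candle is delta_mag dimmer."""
    return 10.0 ** (delta_mag / 5.0)


if __name__ == "__main__":
    d_pc = 21e6 / LY_PER_PARSEC  # SN 2011fe distance from fig 6, in parsecs
    m_peak = apparent_magnitude(M_TYPE_IA, d_pc)
    print(f"Predicted peak apparent magnitude: {m_peak:.2f}")
    print(f"0.25 mag dimmer -> {distance_ratio_for_dimming(0.25):.3f}x farther")
```

The last function shows why unexpected dimness matters: a supernova a quarter of a magnitude fainter than predicted for its redshift sits about 12% farther away than a non-accelerating expansion would place it.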

Fig 7: Left: Cosmic history including inflation and dark energy. Right: After stars formed in the early Universe, their ultraviolet light is expected to have penetrated the primordial hydrogen gas and altered the excitation state of its 21-centimetre hyperfine line, causing the gas to absorb photons from the cosmic microwave background and producing a distortion at radio frequencies of less than 200 MHz. The latest onset of the cosmic dawn is estimated to be 180 million years after the Big Bang. The signal's disappearance gives away a second milestone, when more-energetic X-rays from the deaths of the first stars raised the temperature of the gas and turned off the signal, around 250 million years after the Big Bang. The strength of the signal suggests that either there was more radiation than expected in the cosmic dawn, or the gas was cooler than predicted. That points to dark matter, which theories suggest should have been cold in the cosmic dawn. The results suggest dark matter should be lighter than the current theory indicates. This could help to explain why physicists have failed to observe dark matter directly (doi:10.1038/nature25792).
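The radio frequencies at which this signal appears follow from redshifting the 21-centimetre line, whose rest frequency is about 1420.4 MHz. As a rough sketch, the code below inverts the age formula of a flat matter plus cosmological constant universe to estimate the redshift at the two ages quoted in the caption, then computes the observed frequency. The cosmological parameters are the values quoted elsewhere in this article, radiation is neglected, and the function names are illustrative.

```python
import math

REST_FREQ_MHZ = 1420.40575    # rest frequency of the hydrogen 21-cm line
KM_PER_MPC = 3.0857e19        # kilometres in one megaparsec
SECONDS_PER_GYR = 3.15576e16  # seconds in 10^9 Julian years


def redshift_at_age(t_gyr, h0=67.0, omega_m=0.317, omega_lambda=0.683):
    """Redshift at cosmic age t_gyr in a flat matter + Lambda universe.

    Inverts t(a) = 2 / (3 H0 sqrt(OL)) * asinh( sqrt(OL / Om) * a^(3/2) ).
    Radiation is neglected (an assumption of this sketch).
    """
    hubble_time_gyr = KM_PER_MPC / h0 / SECONDS_PER_GYR
    s = math.sinh(1.5 * math.sqrt(omega_lambda) * t_gyr / hubble_time_gyr)
    a = (math.sqrt(omega_m / omega_lambda) * s) ** (2.0 / 3.0)
    return 1.0 / a - 1.0


def observed_21cm_mhz(z: float) -> float:
    """Observed frequency in MHz of the 21-cm line emitted at redshift z."""
    return REST_FREQ_MHZ / (1.0 + z)


if __name__ == "__main__":
    for age_myr in (180, 250):
        z = redshift_at_age(age_myr / 1000.0)
        print(f"{age_myr} Myr: z = {z:.1f}, observed at {observed_21cm_mhz(z):.0f} MHz")
```

Both ages map to redshifts in the high teens and observed frequencies of several tens of MHz, comfortably below the 200 MHz bound mentioned in the caption.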

Soon after, dark energy was supported by independent observations: in 2000, the BOOMERanG and Maxima cosmic microwave background experiments observed the first acoustic peak in the CMB, showing that the total (matter+energy) density is close to 100% of critical density. Then in 2001, the 2dF Galaxy Redshift Survey gave strong evidence that the matter density is around 30% of critical. The large difference between these two supports a smooth component of dark energy making up the difference of some 70%.

Dark energy is poorly understood at a fundamental level. The main required properties are that it functions as a type of anti-gravity, that it dilutes much more slowly than matter as the universe expands, and that it clusters much more weakly than matter, or perhaps not at all.

The cosmological constant Λ (see equation 5) is the simplest possible form of dark energy, since it is constant in both space and time. It leads to the current standard ΛCDM model of cosmology, involving the cosmological constant Λ and cold dark matter. ΛCDM is frequently referred to as the standard model of Big Bang cosmology, because it is the simplest model that provides a reasonably good account of (1) the cosmic microwave background, (2) the large-scale structure of the galaxies, (3) the abundances of hydrogen (including deuterium), helium, and lithium and (4) the accelerating expansion of the universe.

Antimatter gravity could also provide an explanation for the Universe's expansion if significant amounts of antimatter can be found. Villata argues that the current formulation of general relativity, taken together with CPT symmetry, predicts that matter and antimatter are both self-attractive, yet mutually repel each other. CPT symmetry means that, in order to transform a physical system of matter into an equivalent antimatter system (or vice versa) described by the same physical laws, not only must particles be replaced with corresponding antiparticles (C operation), but an additional PT transformation is also needed. From this perspective, antimatter can be viewed as normal matter that has undergone a complete CPT transformation, in which its charge, parity and time are all reversed. Even though the charge component does not affect gravity, parity and time affect gravity by reversing its sign. So although antimatter has positive mass, it can be thought of as having negative gravitational mass, since the gravitational charge in the equation of motion of general relativity is not simply the mass, but includes a factor that is PT-sensitive and yields the change of sign. CPT symmetry means that antimatter basically exists in an inverted spacetime – the P operation inverts space, and the T operation inverts time (Villata 2011).

Quintessence is a model of dark energy in the form of a scalar field forming a fifth force of nature that changes over time, unlike the cosmological constant, which always stays fixed. It could be either attractive or repulsive, depending on the ratio of its kinetic and potential energy. Quintessence theories, which combine standard model constraints with a dark energy field, may help to provide a real constraint that could enable string theories to be physically tested, and reduce their vast number of possible universes with differing laws of nature to the ones we experience (arXiv:1806.08362). The scalar field of the Higgs boson would appear to contradict quintessence constraints forbidding scalar field critical points if the two interacted, unless they do so in a particular way which would be physically identifiable (doi:10.1103/PhysRevD.98.086004).

Fig 7b: Claudia de Rham. In the latest acknowledgement of her breakthrough, she received the Blavatnik Award for Young Scientists, two years after winning the Adams prize, one of the University of Cambridge's oldest and most prestigious awards.

Chameleon Particle is a hypothetical scalar particle that couples to matter more weakly than gravity, postulated as a dark energy candidate. Due to a non-linear self-interaction, it has a variable effective mass which is an increasing function of the ambient energy density. As a result, the range of the force mediated by the particle is predicted to be very small in regions of high density (for example on Earth, where it is less than 1 mm) but much larger in low-density intergalactic regions: out in the cosmos chameleon models permit a range of up to several thousand parsecs. As a result of this variable mass, the hypothetical fifth force mediated by the chameleon is able to evade current constraints on equivalence principle violation derived from terrestrial experiments even if it couples to matter with a strength equal to or greater than that of gravity. Although this property would allow the chameleon to drive the currently observed acceleration of the universe's expansion, it also makes it very difficult to test for experimentally. It has been proposed in realistic models of galaxy formation (Realistic simulations of galaxy formation in f(R) modified gravity, Nature Astronomy doi:10.1038/s41550-019-0823-y). It could also be produced in the medial tachocline layers of the Sun through magnetic fields interacting with photons, and has been putatively detected in the XENON1T dark matter experiment (arXiv:2103.15834).

Fig 7c: Above: galaxy formation models. Below: XENON1T detections and chameleon model of solar emission.

Massive Gravity: If gravitons have a mass, then gravity is expected to have a weaker influence on very large distance scales, which could explain dark energy and why the expansion of the universe has not been reined in. Claudia de Rham's work (de Rham, Gabadadze & Tolley 2011, de Rham 2014, de Rham et al. 2017) marks a breakthrough in a century-long quest to build a working theory of massive gravity. Despite successive efforts, previous versions of the theory had the unfortunate feature of predicting the instantaneous decay of every particle in the universe -- an intractable issue that mathematicians refer to as a "ghost". In 2011, de Rham and her collaborators published their landmark paper on massive gravity. The response was initially swift and hostile, due to the possible presence of ghosts in their theory, but after 8 years the theory has stood up and is gaining traction. A discussion of these issues can be found in Merali (2013).

Particle physics predicts the existence of vacuum energy which could explain dark energy, but also asserts that it should be 10¹²⁰ times larger than what is needed to explain the dark force acceleration observed by astronomers. Gravity is evidently long-range, since we feel the gravity of the Sun. However, if the graviton had a tiny mass of less than 10⁻³³ eV, it would still fit with all astronomical observations. (Neutrinos have masses at most of the order of 1 eV, and the electron has a mass of about 511,000 eV.) A mass-carrying graviton would swallow up almost all of the vacuum's energy, leaving behind just a small fraction as dark energy to cause the Universe to accelerate outwards. Experimental tests could soon be carried out within the Solar System, because massive-gravity models predict a gravitational field between Earth and the Moon that is slightly different to that of the Sun. This would create a detectable difference of one part in 10¹² in the precession of the Moon's orbit around Earth. Experiments that fire lasers back and forth between Earth and mirrors left on the Moon currently measure the distance between the two bodies and that angle with an accuracy of one part in 10¹¹.
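To see why so tiny a graviton mass would only matter on cosmic scales, here is a minimal sketch that converts a mass into a force range. It assumes the usual Yukawa-style estimate that the range of a force is of the order of the reduced Compton wavelength ħ/(mc) of its carrier; the function name and the constants are illustrative choices, not taken from the papers cited above.

```python
# Reduced Planck constant times c, in eV·m (rounded CODATA value; assumption of this sketch).
HBAR_C_EV_M = 1.973269804e-7
METRES_PER_LIGHT_YEAR = 9.4607e15


def compton_range_m(mass_ev: float) -> float:
    """Reduced Compton wavelength hbar/(m c) in metres, for a rest energy given in eV."""
    return HBAR_C_EV_M / mass_ev


# Graviton mass bound quoted in the text: about 1e-33 eV.
graviton_range_m = compton_range_m(1e-33)
graviton_range_gly = graviton_range_m / METRES_PER_LIGHT_YEAR / 1e9

print(f"range ~ {graviton_range_m:.2e} m ~ {graviton_range_gly:.0f} billion light years")
```

Under this assumption a 10⁻³³ eV graviton has a range of roughly 2 × 10²⁶ m, comparable to the size of the observable universe, which is why gravity would look unmodified on Solar System and galactic scales.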

Dark matter/energy: A model of dark energy emerging as a repulsive magnetic effect of dark matter has also been proposed (Loeve, Nielsen & Hansen 2021).

Fig 8: (Left) Accelerating expansion with supernova and cosmic background measures. (Right) Phantom dark energy picture of the Big Rip. Cosmologists estimate that the acceleration began roughly 5 billion years ago. Before that, it is thought that the expansion was decelerating, due to the attractive influence of dark matter and baryons. The density of dark matter in an expanding universe decreases and eventually the dark energy dominates. When the volume of the universe doubles, the density of dark matter is halved, but the density of dark energy is constant for a cosmological constant and changes only slowly otherwise.
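The dilution argument in the caption can be sketched numerically. The snippet assumes the standard result that a fluid with constant equation of state w has an energy density scaling as V^-(1+w) with volume V; the function name and the sample values of w are illustrative.

```python
def density_factor(volume_factor: float, w: float) -> float:
    """Factor by which energy density changes when the volume grows by volume_factor.

    Assumes a constant equation of state w, for which density scales as V ** -(1 + w).
    """
    return volume_factor ** (-(1.0 + w))


for label, w in [
    ("matter (w = 0)", 0.0),
    ("radiation (w = 1/3)", 1.0 / 3.0),
    ("cosmological constant (w = -1)", -1.0),
    ("phantom (w = -1.1)", -1.1),
]:
    print(f"{label}: density x {density_factor(2.0, w):.3f} when the volume doubles")
```

Matter halves, a cosmological constant stays fixed, and a phantom component with w < -1 actually grows denser as space expands, which is why any such component eventually dominates.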

The changing rate of expansion of the universe is described by the equation of state parameter $w = p/\rho$, where p is the pressure and ρ is the energy density (in units with c = 1). Einstein's field equations (5) have an exact solution in the form of the Friedmann-Lemaitre-Robertson-Walker (FLRW) metric describing the scale a of a homogeneous, isotropic expanding or contracting universe that is path connected, but not necessarily simply connected:

$$\frac{\ddot{a}}{a} = -\frac{4\pi G}{3}\left(\rho + 3p\right) + \frac{\Lambda}{3}$$

If we examine the "effective" pressure and energy density:

$$\rho' = \rho + \frac{\Lambda}{8\pi G}, \qquad p' = p - \frac{\Lambda}{8\pi G},$$

we can see that

$$\frac{\ddot{a}}{a} = -\frac{4\pi G}{3}\left(\rho' + 3p'\right) = -\frac{4\pi G}{3}\left(1 + 3w\right)\rho' \qquad (6)$$

where $w = p'/\rho'$ is the equation of state of the effective fluid. This shows us the underlying relationship between the cosmological constant Λ and w.

From the above equation (6) we can see that for w < -1/3, the expansion of the universe will continue to accelerate. A fixed cosmological constant corresponds to w = -1, which we can see as follows. The cosmological constant has negative pressure equal in magnitude to its energy density, and so causes the expansion of the universe to accelerate if it is already expanding, and vice versa. This is because energy must be lost from inside a container (the container must do work on its environment) in order for the volume to increase. Specifically, a change in volume dV requires work done equal to a change of energy −p dV, where p is the pressure. But the amount of energy in a container full of vacuum actually increases when the volume increases, because the energy is equal to ρV, where ρ is the energy density of the cosmological constant. Therefore, p is negative and p = −ρ.
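The threshold w < -1/3 can be checked with a short sketch. It assumes the standard single-fluid form of the FLRW acceleration equation, (d²a/dt²)/a = −(4πG/3)(1 + 3w)ρ; the density used is only an illustrative value near the critical density, and the function name is hypothetical.

```python
import math

G = 6.674e-11  # Newton's constant in SI units (rounded)


def acceleration_over_scale(rho: float, w: float) -> float:
    """Return (d2a/dt2)/a = -(4 pi G / 3) (1 + 3 w) rho for a single fluid.

    rho is a mass density in kg/m^3 and w = p / (rho c^2) is dimensionless.
    """
    return -(4.0 * math.pi * G / 3.0) * (1.0 + 3.0 * w) * rho


RHO_ILLUSTRATIVE = 1e-26  # kg/m^3, roughly the critical density; illustrative only

for w in (1.0 / 3.0, 0.0, -0.5, -1.0):
    value = acceleration_over_scale(RHO_ILLUSTRATIVE, w)
    trend = "accelerating" if value > 0 else "decelerating"
    print(f"w = {w:+.3f}: (d2a/dt2)/a = {value:+.2e} s^-2 ({trend})")
```

Radiation (w = 1/3) and matter (w = 0) decelerate the expansion, while any component with w below -1/3, including the cosmological constant at w = -1, accelerates it.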

Phantom Dark Energy: Hypothetical phantom energy would have an equation of state w < -1. It possesses negative kinetic energy, and predicts expansion of the universe in excess of that predicted by a cosmological constant, which leads to a Big Rip. The expansion rate becomes infinite in finite time, so the expansion accelerates without bound, passing the speed of light (which is possible because it involves expansion of the universe itself, not particles moving within it). More and more objects then leave our observable universe faster than its expansion, as light and information emitted from distant stars and other cosmic sources cannot "catch up" with the expansion. As the observable universe expands, objects will be unable to interact with each other via fundamental forces, and eventually the expansion will prevent any action of forces between any particles, even within atoms, "ripping apart" the universe. One estimate puts the destruction of the Milky Way at 60 million years before the rip, the solar system at 3 months, Earth at 30 minutes and atoms at 10⁻¹⁹ seconds before it, as smaller and smaller scales become overwhelmed (fig 8). The value of w has been constrained by Planck in 2013 and 2015 to be w = -1.13 ± 0.13 and w = -1.006 ± 0.045 respectively, and the value from WMAP9 is w = -1.084 ± 0.063, suggesting a possible Big Rip (arXiv:1708.06981, arXiv:1502.01589, arXiv:1212.5226, arXiv:1409.4918).
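To get a feel for how far off a Big Rip would be for the quoted central values of w, here is a rough sketch. It assumes the approximation of Caldwell, Kamionkowski and Weinberg (2003), t_rip − t_0 ≈ (2/3) / (|1 + w| H0 √(1 − Ωm)), which holds only for a constant w < -1. The values H0 = 70 km/s/Mpc and Ωm = 0.3 are illustrative assumptions, not taken from this article, and the quoted error bars (which include w = -1, where no rip occurs) are ignored.

```python
import math

KM_PER_MPC = 3.0857e19       # kilometres in one megaparsec
SECONDS_PER_GYR = 3.1557e16  # seconds in one billion Julian years


def time_to_big_rip_gyr(w: float, h0_km_s_mpc: float = 70.0, omega_m: float = 0.3) -> float:
    """Approximate time from today until the Big Rip, in billions of years.

    Uses t_rip - t_0 ~ (2/3) / (|1 + w| H0 sqrt(1 - omega_m)), valid only for constant w < -1.
    """
    if w >= -1.0:
        raise ValueError("a Big Rip requires w < -1")
    hubble_time_gyr = KM_PER_MPC / h0_km_s_mpc / SECONDS_PER_GYR
    return (2.0 / 3.0) * hubble_time_gyr / (abs(1.0 + w) * math.sqrt(1.0 - omega_m))


for w in (-1.13, -1.084, -1.006):
    print(f"w = {w}: rip in roughly {time_to_big_rip_gyr(w):.0f} billion years")
```

The closer w sits to -1, the further away the rip recedes, so even the most negative central value above places it many times the present age of the universe in the future.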

One application of phantom energy involves a cyclic model of the universe (arXiv:hep-th/0610213), in which dark energy with a w < -1 equation of state leads to a turnaround extremely shortly before the would-be Big Rip, at which both the volume and entropy of our universe decrease by a gigantic factor, while very many independent, similarly small contracting universes are spawned.

One theory attaches the turning on of dark energy part way through the expansion to new types of string-theory related axions (arXiv:1409.0549). Another attributes it to an additional scalar field that operates in a see-saw mechanism with the grand unification energy of the Higgs particle (doi:10.1103/PhysRevLett.111.061802), gaining a very small energy in inverse relation to the Higgs energy; the see-saw mechanism is also used to model small neutrino masses. Yet another ascribes it to 'dark magnetism', primordial photons with wavelength greater than the universe (arxiv.org/abs/1112.1106).

Unimodular gravity and Mass-Energy Leakage: Dark energy could come about because the total amount of energy in the universe isn't conserved, but may gradually disappear. Dark energy could be a new field, a bit like an electric field, that fills space. Or it could be part of space itself - a pressure inherent in the vacuum - called a cosmological constant. Quantum mechanics suggests the vacuum itself should fluctuate imperceptibly. In general relativity, those tiny quantum fluctuations produce an energy that would serve as the cosmological constant. Yet, it should be 120 orders of magnitude too big - big enough to obliterate the universe. General relativity assumes a mathematical symmetry called general covariance, which says that no matter how you label or map spacetime coordinates - i.e. positions and times of events - the predictions of the theory must be the same. That symmetry immediately requires that energy and momentum are conserved. Unimodular gravity possesses a more limited version of that mathematical symmetry and quantum fluctuations of the vacuum do not produce gravity or add to the cosmological constant, which can be set to the desired value. If one allows the violation of the conservation of energy and momentum, it can set the value of the cosmological constant. Dark energy thus keeps track of how much energy and momentum has been lost over the history of the universe (arXiv:1604.04183, doi:10.1126/science.aal0603).

A thermodynamic interpretation of dark energy has also been proposed. Carroll and Chatwin-Davies (arxiv.org/abs/1703.09241) take a definition of entropy which uses a quantum mechanical description of space-time to calculate what happens to the geometry of space-time as it evolves. Once a universe has reached peak entropy, it is effectively described by de Sitter geometry. In the 1980s, Robert Wald showed that a universe with a positive cosmological constant will end up as a flat, empty, featureless void known as de Sitter space, and Tom Banks suggested then that the value of dark energy could be related to the entropy of space-time. This thermodynamic way of thinking turns the standard view of dark energy on its head: dark energy emerges from the quantum structure of space-time and then drives the accelerated expansion. Solving the mystery of dark energy's value then becomes a case of justifying the choice of a particular quantum mechanical description of space-time.

Dark matter is likewise poorly understood. There are four basic candidates: axions; machos (massive compact halo objects such as non-luminous small stars and black holes); wimps (weakly interacting massive particles, which might emerge from extensions of the standard model); and complex dark matter, experiencing strong self-interactions while interacting with normal matter only through gravity.

A model of dark matter also being the source of dark energy, through repulsive magnetic effects, has also been proposed (Loeve, Nielsen & Hansen 2021).

In terms of WIMPs, the most sensitive dark matter detector in the world is Gran Sasso's XENON1T, which looks for flashes of light created when dark matter interacts with atoms in its 3.5-tonne tank of extremely pure liquid xenon. But the team reported no dark matter from its first run, and as of May 2018 the larger second run reported likewise. Neither was there any signal in data collected over two years during the second iteration of China's PandaX experiment, based in Jinping in Sichuan province. Hunts in space have also failed to find WIMPs, and hopes are fading that a once-promising γ-ray signal detected by NASA's Fermi telescope from the centre of the Milky Way (see fig 11) was due to dark matter, since more-conventional sources seem to explain the observation. There has been only one major report of a dark-matter detection, made by the DAMA collaboration at Gran Sasso, but no group has succeeded in replicating that highly controversial result, although renewed attempts to match it are under way (Gibney 2017). In 2018 DAMA announced new results confirming the effect with new detectors. However, the upgrade has made the experiment sensitive to lower-energy collisions, and for typical dark-matter models the timing of the fluctuations, as seen from Earth, should reverse below certain energies. The latest results don't show that. Furthermore, the COSINE-100 experiment, which uses sodium iodide crystals as DAMA does, has seen no effect. LUX, the Large Underground Xenon experiment in South Dakota, also reported no sign (Aron 2016). The latest round of results seems to rule out the simplest and most elegant supersymmetry-based wimp theories, leaving open the possibility of axions, or a hidden sector of particles interacting more feebly, or not at all, with normal matter.

Interest has also converged on a link between dark matter and anti-matter, in which axions interact differently with anti-matter, leading to a possible explanation of both dark matter and the preponderance of matter over anti-matter (Carosi G. 2019 Nature 575 293-4 doi:10.1038/d41586-019-03431-5). Significantly, because axions are bosons and can cohabit, the constraints on their possible masses are much less confining than for other dark matter candidates. Such approaches include the notion of "quark nuggets": massive collections, e.g. of anti-quarks, surrounded by an envelope of axions which maintain their stability and shield them from external ordinary matter, so that almost no interaction occurs (Quark nuggets of wisdom; Cosmic-ray detector might have spotted nuggets of dark matter).

The simplest model of dark matter portrays it as a single particle - one that happens to interact with others of its kind and normal matter very little or not at all. Physicists favor the most basic explanations that fit the bill and add extra complications only when necessary, so this scenario tends to be the most popular. For dark matter to interact with itself requires not only dark matter particles but also a dark force to govern their interactions and dark boson particles to carry this force. This more complex picture mirrors our understanding of normal matter particles, which interact through force-carrying particles. Self-interacting dark matter with dark forces and dark photons may not be as simple as the single-particle explanation but it is just as reasonable an idea.

A 2015 study of four colliding galaxies in the galaxy cluster Abell 3827 suggested for the first time that the dark matter in them may be interacting with itself through some unknown force other than gravity that has no effect on ordinary matter. The dark matter in Abell 3827 is plentiful, so it warps the space around it significantly. The scientists found that in at least one of the colliding galaxies the dark matter had become separated from its stars and other visible matter by about 5,000 light-years. One explanation is that the dark matter from this galaxy interacted with dark matter from one of the other galaxies flying by it, and these interactions slowed it down, causing it to separate and lag behind the normal matter (doi:10.1038/nature.2015.17350).

D-star hexaquark Bose-Einstein condensate: When six quarks combine, they create a type of particle called a dibaryon, or hexaquark. The d-star hexaquark (ududud), made of six light quarks, three u-quarks and three d-quarks, was described in 2014 and was the first non-trivial hexaquark detection. Because d-stars are bosons, at close to absolute zero they could form Bose-Einstein condensates which might remain stable and form dark matter without having to extend the standard model (Bashkanov & Watts 2020). During the earliest moments after the Big Bang, as the cosmos cooled, stable d*(2380) hexaquarks could have formed alongside baryonic matter, and the production rate of this particle would have been sufficient to account for the 85% of the Universe's mass that is believed to be dark matter. Calculations have shown that the H dibaryon (udsuds), which could result from the combination of two uds hyperons, is light and (meta)stable and takes more than twice the age of the universe to decay.

Dark Negative Energy: A new theory unifies dark matter and dark energy into a single phenomenon: a fluid which possesses negative mass accompanied by negative gravity. To avoid this fluid rapidly diluting itself, the theory introduces a "creation tensor", which allows negative masses to be continuously created. It demonstrates that under continuous creation, this negative mass fluid does not dilute during the expansion of the cosmos and appears to be identical to dark energy. It also claims to provide the first correct predictions of the behaviour of dark matter halos: the accompanying computer simulation predicts the formation of dark matter halos just like the ones inferred by observations using modern radio telescopes (Farnes J 2018 Astronomy & Astrophysics, arXiv:1712.07962).

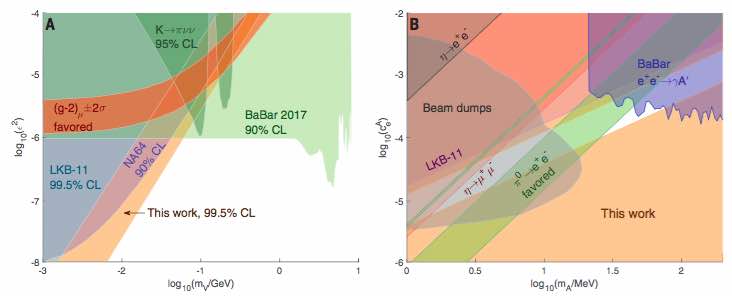

Dark Sector Theories: There are further searches under way for lighter dark matter candidates such as the dark photon, using very intense beams of lower energy particles. Complex dark matter, or the dark sector, was first suggested in 1986 (Holdom B 1986 Phys. Lett. B 166, 196-198), but remained largely unexplored until a group of theorists resurrected the theory (Arkani-Hamed N et al. 2009 Phys. Rev. D 79, 015014), in light of results from a 2006 satellite mission called PAMELA (Payload for Antimatter Matter Exploration and Light-nuclei Astrophysics), which had observed a puzzling excess of positrons in space. Two nearby pulsars, Geminga and PSR B0656+14, were identified as possible sources; however, an international team using the High-Altitude Water Cherenkov Gamma-ray Observatory has measured the positrons emanating from these and found they couldn't account for the surplus reaching Earth. Theorists suggested that the positrons might be spawned by dark-matter particles annihilating each other, but the weakly interacting massive particles (WIMPs) most often suggested would have also decayed into protons and antiprotons, which weren't seen by PAMELA. Another motivation came from a result reported in 2004, which found that the magnetic moment created by the spin and charge of the muon did not match the predictions of the standard model, thus suggesting a supersymmetry explanation. This experimental anomaly, called the muon g-2, could also be rectified by a dark-sector force. However, a theoretical paper (arXiv:1801.10244) now suggests the effect may be purely due to gravitational space-time effects of the Earth on the relativistic high-energy muons. Theoretical suggestions that dark matter collapses to form additional hidden galactic structures (doi:10.1103/PhysRevLett.120.051102) and undergoes dark fusion (doi:10.1103/PhysRevLett.120.221806) have also been proposed.

The most recent cosmological data, including the cosmic microwave background radiation anisotropies from Planck 2015, Type Ia supernovae, baryon acoustic oscillations, the Hubble constant and redshift-space distortions, show that an interaction in the dark sector parameterized as an energy transfer from dark matter to dark energy is strongly suppressed. On the other hand, an interaction with the energy flow from dark energy to dark matter is found to be in better agreement with the available cosmological observations (arXiv:1605.04138).

Fig 9: A variety of searches are underway for lighter dark matter candidates. Log-log plots: the vertical axis shows relative interaction strength and the horizontal axis is in GeV, from 0.01 to 1.

High precision measurements of the fine structure constant (fig 9 right; Science 360/6385 191-195 doi:10.1126/science.aap7706) have added further constraints that all but eliminate a dark photon, but do favour a dark axial vector boson, including the relaxion mass and reaction strength, as in the central green triangle (arXiv:1708.00010).

There are two qualitatively different types of neutron lifetime measurements. In the bottle method, ultracold neutrons are stored in a container for a time comparable to the neutron lifetime. The remaining neutrons that did not decay are counted and fit to a decaying exponential, exp(-t/τn). The average from the five bottle experiments included in the Particle Data Group (PDG) is 879.6 ± 0.6 s. In the beam method, both the number of neutrons N in a beam and the protons resulting from β decays are counted, and the lifetime is obtained from the decay rate, dN/dt = -N/τn. This yields a considerably longer neutron lifetime; the average from the two beam experiments included in the PDG average is 888.0 ± 2.0 s. The discrepancy between the two results is 4.0 σ. A possible explanation arises from the neutron having a second decay path into the dark sector. Such a path violates baryon number and generically gives rise to proton decay, via conversion to a neutron followed by its alternate decay. This can be eliminated from the theory if the sum of the masses of the particles in the minimal final state of the neutron decay process is larger than mp − me. On the other hand, for the neutron to decay, this sum must be smaller than the neutron mass, setting prospective bounds on the dark particle mass (Fornal & Grinstein 2017). However, recent evidence from the UCNtau team claims to have ruled out the presence of the telltale gamma rays with 99 percent certainty (arXiv:1802.01595).
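The quoted 4.0 σ tension and the mass window for the dark final state can be reproduced with a few lines. This is a minimal sketch that assumes the two averages are independent with Gaussian errors, and that the beam method sees only decays producing a proton while the bottle method sees all decays. The particle rest energies are rounded values supplied for illustration, not taken from the text, and the window ignores any further nuclear-stability constraints.

```python
import math

# Lifetime averages quoted in the text, in seconds.
BOTTLE, BOTTLE_ERR = 879.6, 0.6
BEAM, BEAM_ERR = 888.0, 2.0


def tension_sigma(a: float, a_err: float, b: float, b_err: float) -> float:
    """Difference between two measurements in units of their combined uncertainty."""
    return abs(a - b) / math.hypot(a_err, b_err)


tension = tension_sigma(BOTTLE, BOTTLE_ERR, BEAM, BEAM_ERR)

# Implied fraction of neutron decays that produce no proton, under the
# assumption that tau_beam = tau_bottle / (proton branching fraction).
dark_fraction = 1.0 - BOTTLE / BEAM

# Rest energies in MeV (rounded; assumptions of this sketch).
M_PROTON, M_NEUTRON, M_ELECTRON = 938.272, 939.565, 0.511
window_low, window_high = M_PROTON - M_ELECTRON, M_NEUTRON

print(f"tension: {tension:.1f} sigma")
print(f"implied dark branching fraction: {dark_fraction:.2%}")
print(f"final-state mass window: {window_low:.3f} to {window_high:.3f} MeV")
```

On these assumptions roughly one neutron decay in a hundred would have to go to the dark channel, and the dark final state would have to fit into a window less than 2 MeV wide.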

Modified Newtonian Dynamics (MOND) attempts to avoid the need for dark matter by modifying gravity to account for the observed high velocities of stars around the galaxy. It amends Newton's Second Law so that, at extremely small accelerations, the gravitational force is proportional to the square of the acceleration instead of the first power, so that the acceleration varies inversely with galactic radius (as opposed to the inverse square). Such accelerations are characteristic of galaxies, yet far below anything typically encountered in the Solar System or on Earth. However, MOND and its relativistic generalisations such as TeVeS do not adequately account for observed properties of galaxy clusters, and no satisfactory cosmological model has been constructed. Furthermore, TeVeS and another class of so-called Galileon theories, which introduce scalar and/or vector fields that decay more slowly, have been decisively disproved by the neutron star collision detected by LIGO, which showed that gravitational waves travel at the speed of light, inconsistent with these theories.
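The effect of the modified force law on rotation curves can be sketched as follows. In the low-acceleration limit described above, a²/a0 = GM/r², so a = √(GMa0)/r; combined with a = v²/r this gives v⁴ = GMa0, a speed independent of radius. The acceleration scale a0 = 1.2 × 10⁻¹⁰ m/s² and the galaxy mass are illustrative assumptions not given in the text, and the sketch treats the galaxy as a point mass and ignores the interpolation between the Newtonian and MOND regimes.

```python
G = 6.674e-11          # m^3 kg^-1 s^-2
A0 = 1.2e-10           # m/s^2, a commonly quoted MOND scale (assumed here)
SOLAR_MASS = 1.989e30  # kg
KPC = 3.0857e19        # m


def newtonian_speed(mass_kg: float, radius_m: float) -> float:
    """Circular speed from a = G M / r^2 and a = v^2 / r."""
    return (G * mass_kg / radius_m) ** 0.5


def deep_mond_speed(mass_kg: float) -> float:
    """Circular speed in the low-acceleration limit, where a^2 / a0 = G M / r^2."""
    return (G * mass_kg * A0) ** 0.25


mass = 1e11 * SOLAR_MASS  # illustrative baryonic mass of a large galaxy
for r_kpc in (20, 40, 80):
    v_newton = newtonian_speed(mass, r_kpc * KPC) / 1e3
    v_mond = deep_mond_speed(mass) / 1e3
    print(f"r = {r_kpc} kpc: Newton {v_newton:.0f} km/s, deep MOND {v_mond:.0f} km/s")
```

The Newtonian speed falls off as the square root of the radius, while the deep-MOND speed stays flat at about 200 km/s for this mass, mimicking the flat rotation curves otherwise attributed to dark matter halos.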

Fig 10: EG and its experimental test: (a) Two forms of long range entanglement connecting bulk excitations that carry the positive dark energy either with the states on the horizon or with each other. (b) In anti-de-Sitter space (left) the entanglement entropy obeys a strict area law and all information is stored on the boundary. In de-Sitter space (right) the information delocalizes into the bulk volume and creates a memory effect in the dark energy medium by removing the entropy from an inclusion region. (c) The ESD profile predicted by EG for isolated central galaxies, both in the case of the point mass approximation (dark red, solid) and the extended galaxy model (dark blue, solid), compared with observed values. The difference between the predictions of the two models is comparable to the median 1σ uncertainty on our lensing measurements (grey band).

Emergent Gravity (EG) as a Comprehensive Solution: EG is a radical new theory of gravitation developed by Erik Verlinde in 2011 (arXiv:1001.0785). In it he developed from scratch a fundamental theory of how Newtonian gravitation can be shown to arise naturally when space is emergent through a holographic scenario similar to the one discussed above in the context of black holes. Gravity is explained as an entropic force caused by changes in the information associated with the positions of material bodies. A relativistic generalization of the presented arguments directly leads to Einstein's equations. The way in which gravity arises from entropy can most easily be visualized in the context of polymer elasticity: when a linear polymer, which thermodynamically wriggles at random into a disordered arrangement, is pulled out straight, the result is an elastic force tending to take it back into a disordered configuration. Space is then an emergent property of the holographic boundary, and gravitation a consequence of entropy following an area law at the boundary surface, as in black hole entropy (Bekenstein J 1973 Black holes and entropy Phys. Rev. D 7, 2333).

In November 2016 Verlinde (arXiv:1611.02269) extended the theory to make predictions that can explain both dark energy and dark matter as manifestations of entanglement under the holographic scenario. The entanglement is long range and connects bulk excitations that carry the positive dark energy either with the states on the horizon or with each other. Both situations lead to a thermal volume law contribution to the entanglement entropy that overtakes the area law at the cosmological horizon. Due to the competition between area and volume law entanglement the microscopic states do not thermalise at sub-Hubble scales, but exhibit memory effects in the form of an entropy displacement caused by (baryonic) matter. The emergent laws of gravity thus contain an additional 'dark' gravitational force describing the 'elastic' response due to the entropy displacement, which in turn explains the observed phenomena in galaxies and clusters currently attributed to dark matter.

A month later, in December 2016 (arXiv:1612.03034), a group of astronomers tested the theory using weak gravitational lensing measurements. As noted, in EG the standard gravitational laws are modified on galactic and larger scales due to the displacement of dark energy by baryonic matter. EG thus gives an estimate of the excess gravity (as an apparent dark matter density) in terms of the baryonic mass distribution and the Hubble parameter. The group measured the apparent average surface mass density profiles of 33,613 isolated central galaxies and compared them to those predicted by EG based on the galaxies' baryonic masses. They found that the prediction from EG, despite requiring no free parameters, is in good agreement with the observed galaxy-galaxy lensing profiles. This suggests that a radical revisioning of the relationship between gravity and cosmology could be under way, which will transform current attempts at unifying gravitation and quantum cosmology.
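To give a feel for how an apparent dark matter mass can follow from the baryonic mass and the Hubble parameter alone, here is a minimal sketch. It assumes the point-mass limit of Verlinde's relation, M_D(r) = r · sqrt(c H0 M_b / 6G), which is our reading of the formula used in the lensing test rather than something stated in the text above. The Hubble constant of 70 km/s/Mpc, the galaxy mass of 10^11 solar masses and the function name are illustrative assumptions.

```python
import math

# Physical constants in SI units (rounded values).
G = 6.674e-11      # gravitational constant, m^3 kg^-1 s^-2
C = 2.998e8        # speed of light, m/s
M_SUN = 1.989e30   # solar mass, kg
KPC = 3.086e19     # one kiloparsec, m
MPC = 3.086e22     # one megaparsec, m

# ASSUMPTION: Hubble constant of 70 km/s/Mpc, converted to s^-1.
H0 = 70.0e3 / MPC


def apparent_dark_mass(m_baryon_kg, r_m):
    """Apparent dark matter mass within radius r around a point-like
    baryonic mass, in the assumed point-mass limit of emergent gravity:
    M_D(r) = r * sqrt(c * H0 * M_b / (6 * G))."""
    return r_m * math.sqrt(C * H0 * m_baryon_kg / (6.0 * G))


# ASSUMPTION: an illustrative galaxy with 1e11 solar masses of baryons.
m_b = 1e11 * M_SUN
for r_kpc in (50, 100, 200):
    m_d = apparent_dark_mass(m_b, r_kpc * KPC)
    print(f"r = {r_kpc:3d} kpc: apparent dark mass ~ {m_d / M_SUN:.2e} solar masses")
```

The apparent dark mass grows linearly with radius and with the square root of the baryonic mass, with no adjustable parameter beyond the Hubble constant, which is what makes the lensing comparison a parameter-free test.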

Fig 10b: Reaction profile of the protophobic-X

Protophobic X as a Possible Fifth Force: In 2016 a Hungarian team fired protons at thin targets of lithium-7, which created unstable beryllium-8 nuclei that then decayed and spat out pairs of electrons and positrons (arXiv:1504.01527). According to the standard model, physicists should see the number of observed pairs drop as the angle separating the trajectories of the electron and positron increases. But the team reported that at about 140°, the number of such emissions jumps, with 6.8σ significance, creating a 'bump' when the number of pairs is plotted against the angle, before dropping off again at higher angles. This suggests that a minute fraction of the unstable beryllium-8 nuclei shed their excess energy in the form of a new particle with a mass of about 17 MeV, which then decays into an electron-positron pair. The team were searching for a dark photon candidate, but subsequent papers suggested a "protophobic X boson". The theorists showed that the data didn't conflict with any previous experiments, and concluded that it could be evidence for a fifth fundamental force (arXiv:1604.07411). Such a particle would carry an extremely short-range force that acts over distances only several times the width of an atomic nucleus. And where a dark photon (like a conventional photon) would couple to electrons and protons, the new boson would couple to electrons and neutrons. Experimental resolution of this anomaly should be forthcoming within a year. The DarkLight experiment at the Jefferson Laboratory is designed to search for dark photons with masses of 10–100 MeV, by firing electrons at a hydrogen gas target. Now it will target the 17-MeV region as a priority, and could either find the proposed particle or set stringent limits on its coupling with normal matter (doi:10.1038/nature.2016.19957). Indeed, the anomaly has now also been found in a helium-4 transition (arXiv:1910.10459) with 7.2σ significance.
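The short range quoted for such a force follows from the mass of the carrier: a force mediated by a particle of mass m falls off over roughly its reduced Compton wavelength, ħ/mc. The sketch below works this out for a 17 MeV boson. The comparison nucleus size uses the rough empirical rule r ≈ 1.2 fm × A^(1/3), which is an assumption brought in for illustration and not a figure from the experiment; the function name is likewise hypothetical.

```python
HBAR_C_MEV_FM = 197.327  # hbar * c in MeV * femtometres


def force_range_fm(mass_mev):
    """Approximate range of a force carried by a boson of the given
    mass (in MeV), taken as the reduced Compton wavelength hbar/(m c)."""
    return HBAR_C_MEV_FM / mass_mev


# Mass of the proposed X boson, as quoted in the text.
x_range = force_range_fm(17.0)

# ASSUMPTION: nuclear radius from the empirical rule r = 1.2 fm * A^(1/3),
# applied to beryllium-8 (A = 8), so the diameter is 2 * 1.2 * 2 = 4.8 fm.
be8_diameter_fm = 2 * 1.2 * 8 ** (1 / 3)

print(f"Range of a 17 MeV boson: {x_range:.1f} fm")
print(f"Approximate beryllium-8 diameter: {be8_diameter_fm:.1f} fm")
print(f"Ratio: {x_range / be8_diameter_fm:.1f}")
```

By the same formula a massless carrier such as the photon has unlimited range, which is why a 17 MeV boson would go unnoticed outside nuclear-scale experiments.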

Extended SM with Dark Matter Inducing Lepton Flavour Violation: A further theory, which is in principle testable at the LHC (Arcadi G et al. 2018 Lepton flavor violation induced by dark matter doi:10.1103/PhysRevD.97.075022), is one in which the standard model is extended to include dark matter leptons, enlarging the SU(2) of the SM electroweak flavours to a second SU(3) symmetry whose lepton multiplet comprises an electron, an electron neutrino, and a neutral fermion later to be identified as dark matter. This multiplet structure is replicated among the three generations to embed the SM fermionic content. There are therefore three neutral Dirac fermions, the lightest one being a dark matter candidate, which might induce lepton flavor violation (LFV) decays μ → eγ and μ → eee as well as μ − e conversion. This arrangement may thus provide a viable dark matter candidate yielding flavor violation signatures that can be probed in upcoming experiments. Keeping the dark matter mass at the TeV scale, a sizable LFV signal is possible, while reproducing the correct dark matter relic density and meeting limits from direct-detection experiments.

Fig 11: Waxing and waning of prospects of galactic centre gamma ray source from dark matter: Lower left: Dark matter illuminated: In the bullet cluster, two colliding galaxy clusters 3.4 billion light-years away, the galaxies have a total mass far less than that of the cluster's two clouds of hot x-ray emitting gas (red). The blue hues show the distribution of dark matter in the cluster, with far more mass than the gas. Otherwise invisible, the dark matter was mapped by observations of gravitational lensing of background galaxies. Unlike the gas, the dark matter seems to have passed right through, indicating little interaction with itself or other matter, although observations of galaxy cluster Abell 3827 suggest a possible dark force interaction consistent with complex dark matter. Top and right: False colour view of excess gamma-ray emissions from the centre of our galaxy suggesting a dark matter particle with mass ranging from around 10 GeV, at possible LHC energies, upwards. The 1-3 GeV signal is a good fit to a 36-51 GeV dark matter particle annihilating to bb̄. However in 2018 a group of astronomers concluded that this radiation comes from 10 billion year old stars in the galactic bulge close to the black hole (doi:10.1038/s41550-018-0414-3). The angular distribution of the excess is approximately spherically symmetric and centered around the dynamical center of the Milky Way (within 0.05° of Sagittarius A*, the central black hole), showing no sign of elongation along the Galactic Plane, which would be expected with a pulsar distribution. The signal is observed to extend to at least 10° from the Galactic Center, disfavoring the possibility that this emission originates from millisecond pulsars. The shape of the gamma-ray spectrum from millisecond pulsars appears to be significantly softer than that of the gamma-ray excess observed from the Inner Galaxy (arXiv:1402.6703). Earthbound dark matter detectors have also caught events consistent with this mass range (doi:10.1038/521017a).
However a survey of wider regions of the Milky Way also shows similar peaks, implying this is not sourced only in the galactic center (arXiv:1704.03910) and casting doubt on the notion of a central source of dark matter decay. The Alpha Magnetic Spectrometer on board the International Space Station has also detected more positrons than expected, which could be the result of dark matter being annihilated, but might also be caused by nearby pulsars. However the idea of a disk of dark matter coplanar with the disc of baryonic matter has received a blow from lack of experimental verification in a search for stellar kinematics of a thin disk using the Gaia satellite (arXiv:1711.03103). More recent estimates of the gamma ray distribution find it is closer to galactic stellar distributions than to that of assumed dark matter (doi:10.1038/s41550-018-0531-z).

Gravitational Waves: Confirmation of the existence of gravitational waves came in 2016 with the detection of the 'chirp' at two widely spaced detectors (fig 12) in the ground-breaking LIGO experiment, which can detect minute gravitational fluctuations as a change in the 4 km laser mirror spacing of less than a ten-thousandth the charge diameter of a proton, equivalent to measuring the distance to Proxima Centauri with an accuracy smaller than the width of a human hair. This characteristic "chirp" signal is believed to be due to the last throes of two colliding black holes in a death spiral. An alternative explanation to coalescing black holes is a gravastar.
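The Proxima Centauri comparison can be checked with a few lines of arithmetic: the fractional change in arm length (the strain) is scaled up to an interstellar distance. The sketch below assumes a proton charge radius of about 0.84 fm, a distance to Proxima Centauri of about 4.25 light years and a typical human hair width of about 70 micrometres; none of these figures comes from the text above, and the variable names are illustrative.

```python
# Figures quoted in the text.
ARM_LENGTH_M = 4.0e3           # LIGO mirror spacing, 4 km
FRACTION_OF_PROTON = 1.0e-4    # "a ten-thousandth" of the proton charge diameter

# ASSUMPTIONS (not from the text): rounded reference values.
PROTON_CHARGE_DIAMETER_M = 2 * 0.84e-15   # charge radius ~0.84 fm
LIGHT_YEAR_M = 9.4607e15
PROXIMA_DISTANCE_M = 4.25 * LIGHT_YEAR_M  # ~4.25 light years
HAIR_WIDTH_M = 7.0e-5                     # ~70 micrometres

# Smallest detectable change in arm length, and the corresponding strain.
delta_length_m = FRACTION_OF_PROTON * PROTON_CHARGE_DIAMETER_M
strain = delta_length_m / ARM_LENGTH_M

# The same fractional precision applied to the distance to Proxima Centauri.
equivalent_precision_m = strain * PROXIMA_DISTANCE_M

print(f"Arm length change: {delta_length_m:.2e} m")
print(f"Strain: {strain:.2e}")
print(f"Equivalent precision at Proxima Centauri: {equivalent_precision_m * 1e6:.1f} micrometres")
print(f"Smaller than a hair width: {equivalent_precision_m < HAIR_WIDTH_M}")
```

Under these assumptions the equivalent precision comes out at a couple of micrometres, comfortably below the width of a hair, so the comparison in the text holds with room to spare.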

Since then, gravitational waves have also been detected, along with a burst of gamma rays, from a pair of colliding neutron stars (fig 12), demonstrating that both gravity and electromagnetism are transmitted at the speed of light and eliminating theories where the speed of gravity is modified to be slower or faster than light. These coincident signals have enabled a much more detailed picture of neutron stars to emerge. The collision generated gravitational waves, picked up by LIGO and Virgo, that lasted an astounding 100 seconds. Less than two seconds later, a NASA satellite recorded a burst of gamma rays. In the wake of the collision, the churning residue forged gold, silver, platinum and a smattering of other heavy elements such as uranium. Such elements' birthplaces were previously unknown, but their origins were revealed by the cataclysm's afterglow. As the collision spurted neutron-rich material into space, a bevy of heavy elements formed through a chain of reactions called the r-process, which requires an environment crammed with neutrons. Atomic nuclei rapidly gobble up neutrons and decay radioactively, thereby transforming into new elements, before resuming their neutron fest. The r-process is thought to produce about half of the elements heavier than iron (Strickland A (2017) First-seen neutron star collision creates light, gravitational waves and gold CNN, Conover E (2017) Neutron star collision showers the universe with a wealth of discoveries Science News).
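A rough estimate shows why a delay of under two seconds is such a tight constraint on the speed of gravity. If both signals left the source at about the same moment, the fractional difference between the two speeds is roughly the arrival delay divided by the total travel time. The sketch below assumes a source distance of about 130 million light years, a figure not given in the text above, and takes the two-second delay as an upper limit; the variable names are illustrative.

```python
SECONDS_PER_YEAR = 3.15576e7   # Julian year in seconds

# ASSUMPTION (not from the text): distance to the merger in light years.
DISTANCE_LIGHT_YEARS = 130e6

# Upper limit on the gamma-ray delay, from "less than two seconds later".
ARRIVAL_DELAY_S = 2.0

# Light travel time from the source, in seconds.
travel_time_s = DISTANCE_LIGHT_YEARS * SECONDS_PER_YEAR

# ASSUMPTION: both signals were emitted at essentially the same moment,
# so the whole delay is attributed to a difference in propagation speed.
fractional_speed_difference = ARRIVAL_DELAY_S / travel_time_s

print(f"Travel time: {travel_time_s:.2e} s")
print(f"Fractional speed difference: below about {fractional_speed_difference:.1e}")
```

This comes out at a few parts in 10^16. A careful bound also has to allow for the gamma rays being emitted some seconds after the merger itself, so this simple estimate is only an order-of-magnitude guide.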

Fig 12: Left: Signal of gravitational waves from LIGO believed to be from two colliding black holes in a binary system, the "chirp" coming from their increasing orbital frequency as they merge. However evidence for the significance of the gamma ray signal at the galactic center has since diminished (arXiv:1704.03910). In October 2017, it was announced that two neutron stars in a neighbouring galaxy had likewise been detected colliding, from gravitational waves picked up by both LIGO and Virgo, but this time, because they were not black holes and light could escape, there was a coincident burst of gamma radiation. Such collisions are also believed to provide nearly half the heavy elements such as gold, later swept into planetary systems. Right: Light images of the radiation burst of the colliding neutron stars, coincident with a similar gravitational wave chirp, showing its change in radiation over time.

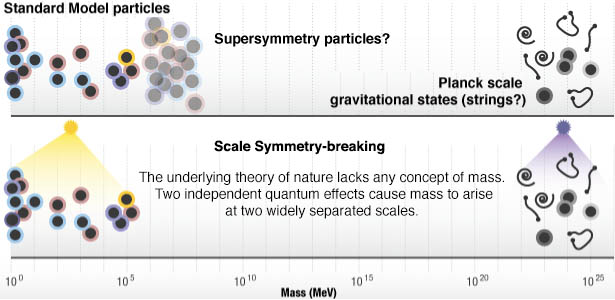

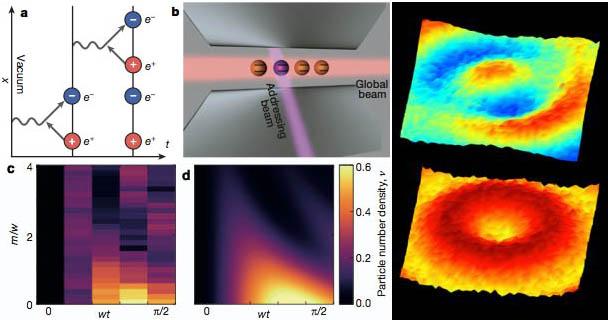

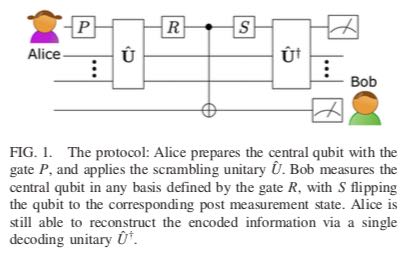

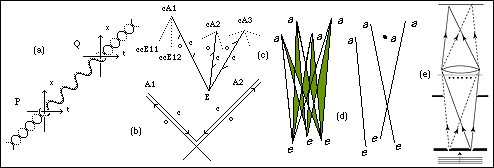
